How to Use Load Balancing for Highly Available Applications?

TL;DR

- Load balancing distributes incoming requests across multiple servers to prevent any single server from becoming a bottleneck, enhancing availability and performance.

- Modern software load balancers (e.g., NGINX, HAProxy, Varnish, LiteSpeed) support both Layer 4 (transport) and Layer 7 (application) traffic handling, which makes them well suited to web apps, APIs, and microservices.

- Load balancing helps auto-scale backend servers smoothly under traffic surges and ensures seamless failover if one node fails.

- For global services, cloud load balancing can distribute traffic regionally or worldwide, reduce latency, and enable disaster recovery through geo-redundancy.

- Combined with caching, SSL offload, and health-check mechanisms, load balancers improve user experience, resource usage, and resilience – making them essential for highly available applications in 2025–2026.

Load balancing is the process of efficiently directing network traffic and distributing workloads across multiple components. It is achieved through the use of specialized nodes known as load balancers. These load balancer nodes are automatically added within our platform when the application server scales up to ensure requests are evenly distributed across the backend systems. Moreover, you can manually incorporate and scale load balancer instances within the environment’s topology as needed.
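As a rough illustration of what "evenly distributed" means, the sketch below rotates through a list of backends in round-robin order. It is a minimal teaching example, not the platform's actual algorithm; the `RoundRobinBalancer` class and the backend addresses are hypothetical names chosen for this example.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Hands out backends in a fixed rotating order."""

    def __init__(self, backends):
        if not backends:
            raise ValueError("at least one backend is required")
        self._backends = list(backends)
        self._rotation = cycle(self._backends)

    def next_backend(self):
        # Each call returns the next backend, wrapping around at the end.
        return next(self._rotation)


# Hypothetical backend addresses, used only for illustration.
balancer = RoundRobinBalancer(["10.0.0.1:8080", "10.0.0.2:8080", "10.0.0.3:8080"])
assignments = [balancer.next_backend() for _ in range(6)]
print(assignments)
```

With three backends and six requests, each backend receives exactly two requests. Real balancers usually layer weights, connection counts, or health state on top of this basic rotation.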

Presently, the platform offers five pre-configured managed load balancer stacks:

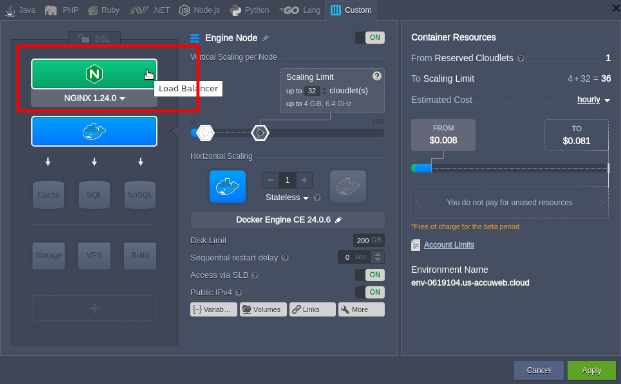

NGINX

NGINX is a well-known open-source server used around the world and renowned for its performance. It integrates with the platform without additional setup or pre-configuration. With built-in Layer 7 load balancing and content caching, NGINX provides a cost-effective and reliable solution for hosting applications. Its scalability, security features, and efficient resource management make it a dependable platform.

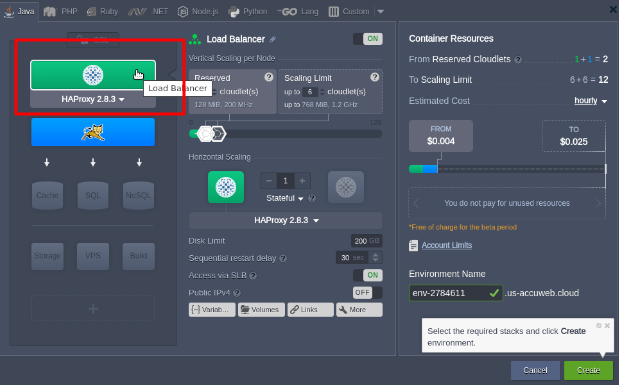

HAProxy

HAProxy (High Availability Proxy) is an open-source solution known for its speed and reliability. It is well suited to high traffic volumes and provides high availability, load balancing, and proxying for TCP and HTTP-based applications. Like the NGINX balancer, HAProxy operates on a single-process, event-driven request-handling model. Its efficient memory management allows it to handle many concurrent requests smoothly, and it supports intelligent persistence and effective DDoS mitigation.
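Persistence means that requests from the same client keep landing on the same backend. The sketch below shows one generic way to get that effect, by hashing a client identifier; it is an assumption-laden illustration rather than HAProxy's own implementation, and `pick_backend` and the backend names are hypothetical.

```python
import hashlib


def pick_backend(client_id, backends):
    """Map a client identifier to one backend, deterministically."""
    digest = hashlib.sha256(client_id.encode("utf-8")).digest()
    # Use the first 8 bytes of the hash as an integer, then wrap to the list size.
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]


# Hypothetical backend names and client address, for illustration only.
backends = ["app-1", "app-2", "app-3"]
first = pick_backend("203.0.113.7", backends)
second = pick_backend("203.0.113.7", backends)
print(first == second)  # True: the same client always maps to the same backend
```

One trade-off of this simple modulo approach is that adding or removing a backend remaps many clients, which is why production balancers often use cookies or consistent hashing instead.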

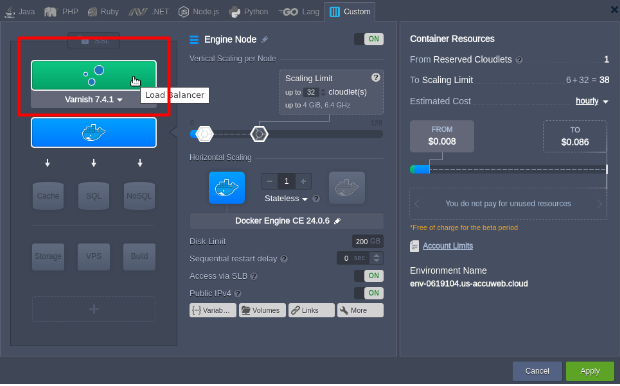

Varnish

Recognized as a caching HTTP reverse proxy, Varnish is a web application accelerator designed for high-traffic dynamic websites. Its design was originally centered on HTTP. In our platform’s implementation, however, it is packaged alongside the NGINX server, with NGINX operating as an HTTPS proxy. This setup allows it to handle data securely using the Custom SSL option. The primary focus remains on speed, achieved mainly through caching, which significantly improves website performance by handling static content delivery efficiently.

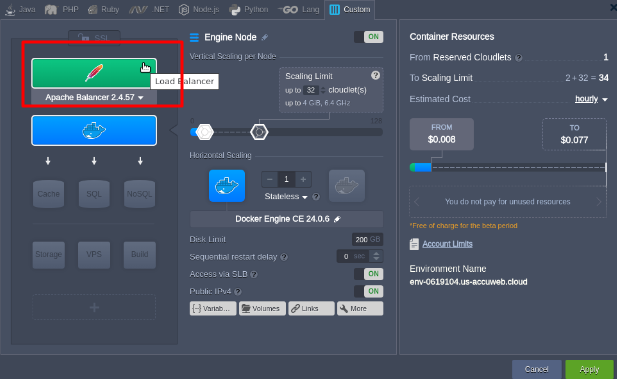

Apache

The Apache load balancer is an open-source server for distributing traffic that offers extensive customization thanks to its modular design. It can be tailored to the requirements of any given environment while delivering advantages such as increased security, high availability, speed, reliability, and centralized authentication/authorization.

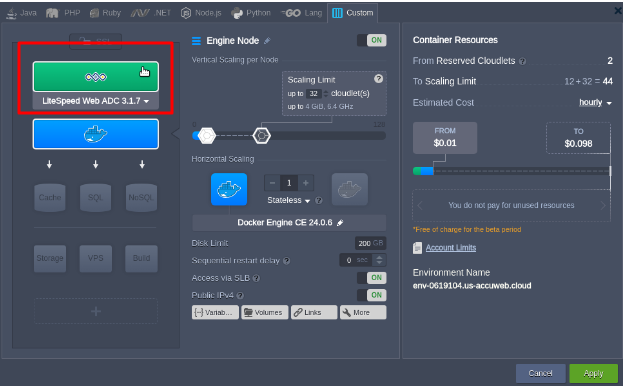

LiteSpeed Web ADC

LiteSpeed Web ADC (Application Delivery Controller) is a high-performance commercial HTTP load-balancing solution. It supports HTTP/3 and the QUIC transport protocol, and includes advanced security features such as web application firewall protection and Layer 7 anti-DDoS filtering. It also offers enterprise-level performance features, including caching, acceleration, optimization, and offloading.

The preferred strategy for production involves utilizing multiple compute nodes alongside a load balancer. This approach guarantees both redundancy and high availability of the system.
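To see why several compute nodes give redundancy, consider the hedged sketch below: it tries each node in order and returns the first one that accepts a TCP connection, so a dead node is simply skipped. The helper names `is_port_open` and `first_reachable` are hypothetical, and the demo uses throwaway sockets on localhost rather than real backends.

```python
import socket


def is_port_open(host, port, timeout=1.0):
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


def first_reachable(nodes, timeout=1.0):
    """Return the first (host, port) that accepts a TCP connection, or None."""
    for host, port in nodes:
        if is_port_open(host, port, timeout):
            return (host, port)
    return None


# Demo: one dead node followed by one live node, both on localhost.
listener = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
listener.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
listener.listen()
live_node = ("127.0.0.1", listener.getsockname()[1])

placeholder = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
placeholder.bind(("127.0.0.1", 0))
dead_node = ("127.0.0.1", placeholder.getsockname()[1])
placeholder.close()  # nothing listens on this port any more

chosen = first_reachable([dead_node, live_node])
print(chosen == live_node)
listener.close()
```

With a single node, the same failure would leave `first_reachable` with nothing to return, which is the practical argument for running at least two nodes behind the balancer.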

Backend Health Checks / Backend Status Checks

Every environment-level load balancer includes a default status check implementation to verify the accessibility and proper functioning of the backends. You can locate specific details in the list below:

NGINX – performs a basic TCP check (verifying that the required server port is available) just before routing a user request to a backend. If the check fails, the next node within the same layer is tried.

HAProxy – performs regular TCP checks (every 2 seconds by default) and stores the results in a backend state table, which is kept continually up to date.

Apache Balancer – no health check procedure is performed by default.

Varnish – every backend is set up with the following parameters in the balancer configuration, so that health checks run once per minute with a 30-second timeout:

probe = { .url = "/"; .timeout = 30s; .interval = 60s; .window = 5; .threshold = 2; } }

LiteSpeed ADC – performs a TCP check via the internal IP every second, with a one-second timeout. The health check is enabled by default at the Worker Group level.
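The `.window` and `.threshold` values in the Varnish probe above can be read as "look at the last 5 probe results and treat the backend as healthy if at least 2 succeeded". The sketch below models that rule in plain Python. It is a simplified illustration of the idea, not Varnish code; the `ProbeWindow` class is a hypothetical name, and details such as Varnish's initial probe state are ignored.

```python
from collections import deque


class ProbeWindow:
    """Tracks recent probe results and derives a healthy/sick verdict."""

    def __init__(self, window=5, threshold=2):
        self.threshold = threshold
        # A bounded deque drops the oldest result once the window is full.
        self._results = deque(maxlen=window)

    def record(self, success):
        self._results.append(bool(success))

    @property
    def healthy(self):
        # Healthy when enough of the remembered probes succeeded.
        return sum(self._results) >= self.threshold


state = ProbeWindow(window=5, threshold=2)
for outcome in [True, False, False, False, False]:
    state.record(outcome)
print(state.healthy)  # False: only 1 of the last 5 probes succeeded

state.record(True)
state.record(True)
print(state.healthy)  # True: 2 of the last 5 probes succeeded
```

A window with a threshold below its size tolerates occasional failed probes, so one slow response does not immediately remove a backend from rotation.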

Jilesh Patadiya is the Founder and Chief Technology Officer (CTO) of AccuWeb.Cloud and Founder & CTO at AccuWebHosting.com. He shares his web hosting insights on the AccuWeb.Cloud blog, writing mostly about the latest web hosting trends, WordPress, storage technologies, and Windows and Linux hosting platforms.